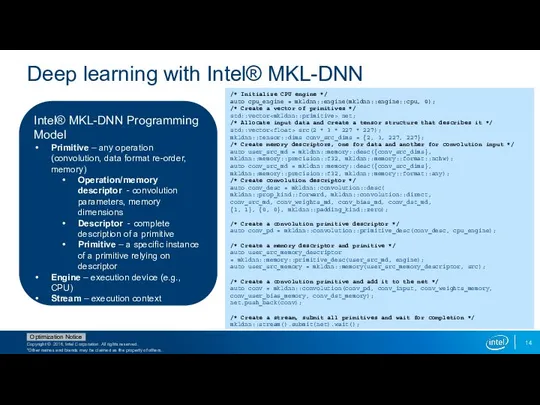

Deep learning with Intel® MKL-DNN

Intel® MKL-DNN Programming Model

Primitive – any operation (convolution, data format re-order, memory)

Operation/memory descriptor – convolution parameters, memory dimensions

Primitive descriptor – complete description of a primitive

Primitive object – a specific instance of a primitive, created from its primitive descriptor

Engine – execution device (e.g., CPU)

Stream – execution context

/* Initialize CPU engine */

auto cpu_engine = mkldnn::engine(mkldnn::engine::cpu, 0);

/* Create a vector of primitives */

std::vector<mkldnn::primitive> net;

/* Allocate input data and create a tensor structure that describes it */

std::vector<float> src(2 * 3 * 227 * 227);

mkldnn::tensor::dims conv_src_dims = {2, 3, 227, 227};

/* Create memory descriptors, one for data and another for convolution input */

auto user_src_md = mkldnn::memory::desc({conv_src_dims},
    mkldnn::memory::precision::f32, mkldnn::memory::format::nchw);

auto conv_src_md = mkldnn::memory::desc({conv_src_dims},
    mkldnn::memory::precision::f32, mkldnn::memory::format::any);

/* Create convolution descriptor */

auto conv_desc = mkldnn::convolution::desc(
    mkldnn::prop_kind::forward, mkldnn::convolution::direct,
    conv_src_md, conv_weights_md, conv_bias_md, conv_dst_md,
    {1, 1}, {0, 0}, mkldnn::padding_kind::zero);

/* Create a convolution primitive descriptor */

auto conv_pd = mkldnn::convolution::primitive_desc(conv_desc, cpu_engine);

/* Create a memory primitive descriptor and a memory primitive */

auto user_src_memory_descriptor
    = mkldnn::memory::primitive_desc(user_src_md, cpu_engine);

auto user_src_memory = mkldnn::memory(user_src_memory_descriptor, src);

/* Create a convolution primitive and add it to the net */

auto conv = mkldnn::convolution(conv_pd, conv_input, conv_weights_memory,
    conv_user_bias_memory, conv_dst_memory);

net.push_back(conv);

/* Create a stream, submit all primitives and wait for completion */

mkldnn::stream().submit(net).wait();
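
The listing describes the source tensor with dimensions {2, 3, 227, 227} in nchw format and runs the convolution with strides {1, 1} and padding {0, 0}. The library-independent sketch below shows what those numbers might mean in practice: how an element index maps to a position in a dense nchw buffer, and how the spatial output size of a convolution follows from input size, kernel size, stride and padding. The names Dims4, nchw_offset and conv_out_extent are hypothetical helpers for illustration and are not part of Intel MKL-DNN. The 11 x 11 kernel size is likewise an assumption, because the slide does not give the weight dimensions.

```cpp
#include <cstddef>

// Hypothetical helper type: logical tensor dimensions in N, C, H, W order,
// mirroring conv_src_dims = {2, 3, 227, 227} from the listing.
struct Dims4 {
    std::size_t n, c, h, w;
};

// Total number of elements in a dense tensor with these dimensions.
constexpr std::size_t element_count(Dims4 d) {
    return d.n * d.c * d.h * d.w;
}

// Linear offset of element (n, c, h, w) in a dense nchw buffer:
// w varies fastest, then h, then c, then n.
constexpr std::size_t nchw_offset(Dims4 d, std::size_t n, std::size_t c,
                                  std::size_t h, std::size_t w) {
    return ((n * d.c + c) * d.h + h) * d.w + w;
}

// Output extent of a convolution along one spatial axis,
// with the same padding applied on both sides (zero padding assumed).
constexpr std::size_t conv_out_extent(std::size_t in, std::size_t kernel,
                                      std::size_t stride, std::size_t pad) {
    return (in + 2 * pad - kernel) / stride + 1;
}

// Worked values for the tensor used in the listing.
constexpr Dims4 src_dims = {2, 3, 227, 227};

// Matches the size of the src vector: 2 * 3 * 227 * 227.
static_assert(element_count(src_dims) == 309174, "buffer size");

// Second image, third channel, first row, sixth column.
static_assert(nchw_offset(src_dims, 1, 2, 0, 5) == 257650, "nchw offset");

// Assumed 11 x 11 kernel, stride 1, padding 0 (stride and padding as in the listing).
static_assert(conv_out_extent(227, 11, 1, 0) == 217, "output extent");
```

Under these assumptions the convolution output would be 217 x 217 per channel. The conv_src_md descriptor in the listing uses format::any instead of nchw, which leaves the choice of layout to the library, so the offset formula applies only to the user-side nchw buffer.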
