Slide 2

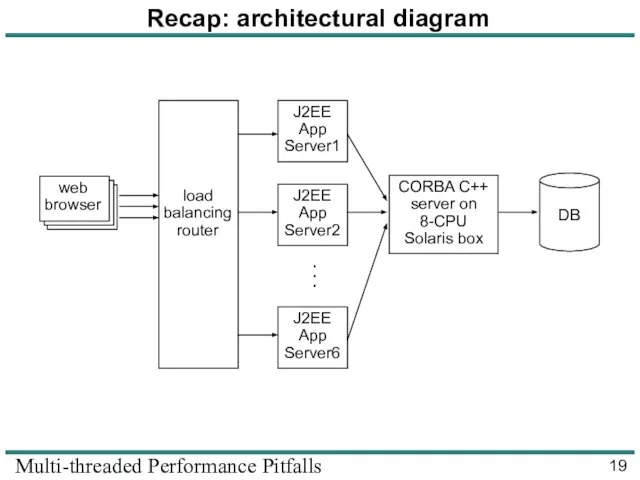

Multi-threaded Performance Pitfalls

License

Copyright © 2008 Ciaran McHale.

Permission is hereby granted, free of charge, to any person obtaining a copy of this training course and associated documentation files (the "Training Course"), to deal in the Training Course without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Training Course, and to permit persons to whom the Training Course is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Training Course.

THE TRAINING COURSE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE TRAINING COURSE OR THE USE OR OTHER DEALINGS IN THE TRAINING COURSE.

Измерение информации

Измерение информации Средства мультимедиа

Средства мультимедиа Раздел 4 Описание модели

Раздел 4 Описание модели  Основы алгоритмики. Библиотеки функций. Раздел 1. Общие правила

Основы алгоритмики. Библиотеки функций. Раздел 1. Общие правила ТЕКСТОВЫЕ ДОКУМЕНТЫ И ТЕХНОЛОГИИ ИХ СОЗДАНИЯ ОБРАБОТКА ТЕКСТОВОЙ ИНФОРМАЦИИ

ТЕКСТОВЫЕ ДОКУМЕНТЫ И ТЕХНОЛОГИИ ИХ СОЗДАНИЯ ОБРАБОТКА ТЕКСТОВОЙ ИНФОРМАЦИИ Разработка модуля формирования листа согласования электронных документов в IPS

Разработка модуля формирования листа согласования электронных документов в IPS Картирование потока создания ценности

Картирование потока создания ценности Основные понятия и определения БД

Основные понятия и определения БД Программирование на языке Паскаль

Программирование на языке Паскаль Инструменты распознования текстов и компьютерного перевода

Инструменты распознования текстов и компьютерного перевода QoS в VoIP

QoS в VoIP Дискретизация графики и видео

Дискретизация графики и видео Структура сайта по отдельным окнам

Структура сайта по отдельным окнам Регрессионный анализ

Регрессионный анализ Благотворительность в России. Контент-анализ

Благотворительность в России. Контент-анализ Программирование на языке Python

Программирование на языке Python Основы безопасности

Основы безопасности Презентация "Основные этапы и правила создания электронного письма" - скачать презентации по Информатике

Презентация "Основные этапы и правила создания электронного письма" - скачать презентации по Информатике Применение автоматного программирования во встраиваемых системах В. О. Клебан, А. А. Шалыто Санкт-Петербургский государственн

Применение автоматного программирования во встраиваемых системах В. О. Клебан, А. А. Шалыто Санкт-Петербургский государственн Эволюция платформенных архитектур информационных систем

Эволюция платформенных архитектур информационных систем ЭЛЕКТРОННАЯ ПОЧТА “Электронная почта, с одной стороны, это просто электронная замена бумажной почты, конвертов, почтальонов, мешков с письмами, а с другой – совершенно новая, замечательная возможность оперативного общения практически без границ и расст

ЭЛЕКТРОННАЯ ПОЧТА “Электронная почта, с одной стороны, это просто электронная замена бумажной почты, конвертов, почтальонов, мешков с письмами, а с другой – совершенно новая, замечательная возможность оперативного общения практически без границ и расст Компьютерная графика

Компьютерная графика Курсовая работа на тему «Разработка базы данных средствами СУБД MS Access. База данных двигателей постоянного тока»

Курсовая работа на тему «Разработка базы данных средствами СУБД MS Access. База данных двигателей постоянного тока»  1D and 2D arrays

1D and 2D arrays Урок №1

Урок №1 Программное обеспечение компьютера

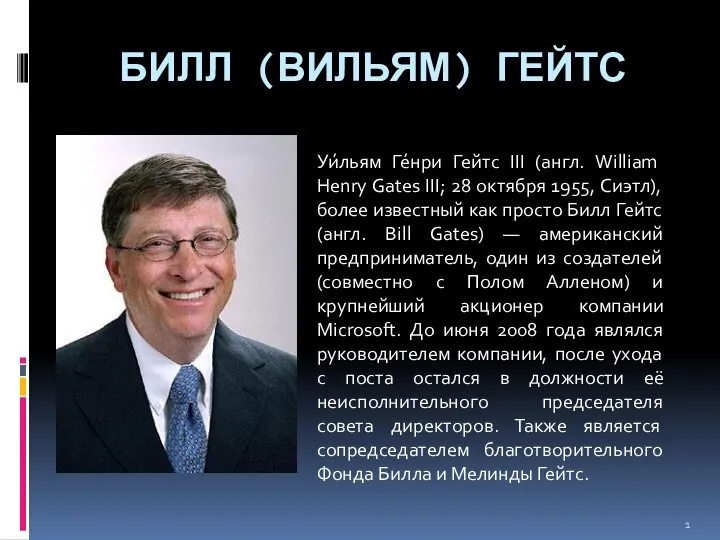

Программное обеспечение компьютера Билл (Вильям) Гейтс Уи́льям Ге́нри Гейтс III (англ. William Henry Gates III; 28 октября 1955, Сиэтл), более известный как просто Билл Гейтс (англ. Bill Gates) — американский предприниматель, один из создателей (совместно с Полом Алленом) и крупнейший ак

Билл (Вильям) Гейтс Уи́льям Ге́нри Гейтс III (англ. William Henry Gates III; 28 октября 1955, Сиэтл), более известный как просто Билл Гейтс (англ. Bill Gates) — американский предприниматель, один из создателей (совместно с Полом Алленом) и крупнейший ак Our Favorite XSS Filters/IDS and how to Attack Them

Our Favorite XSS Filters/IDS and how to Attack Them